Fix The Right Vulnerabilities, Fast.

Actionable. Intelligent. End-to-end.

You don’t need another scanner. You need a solution that acts on vulnerabilities for you.

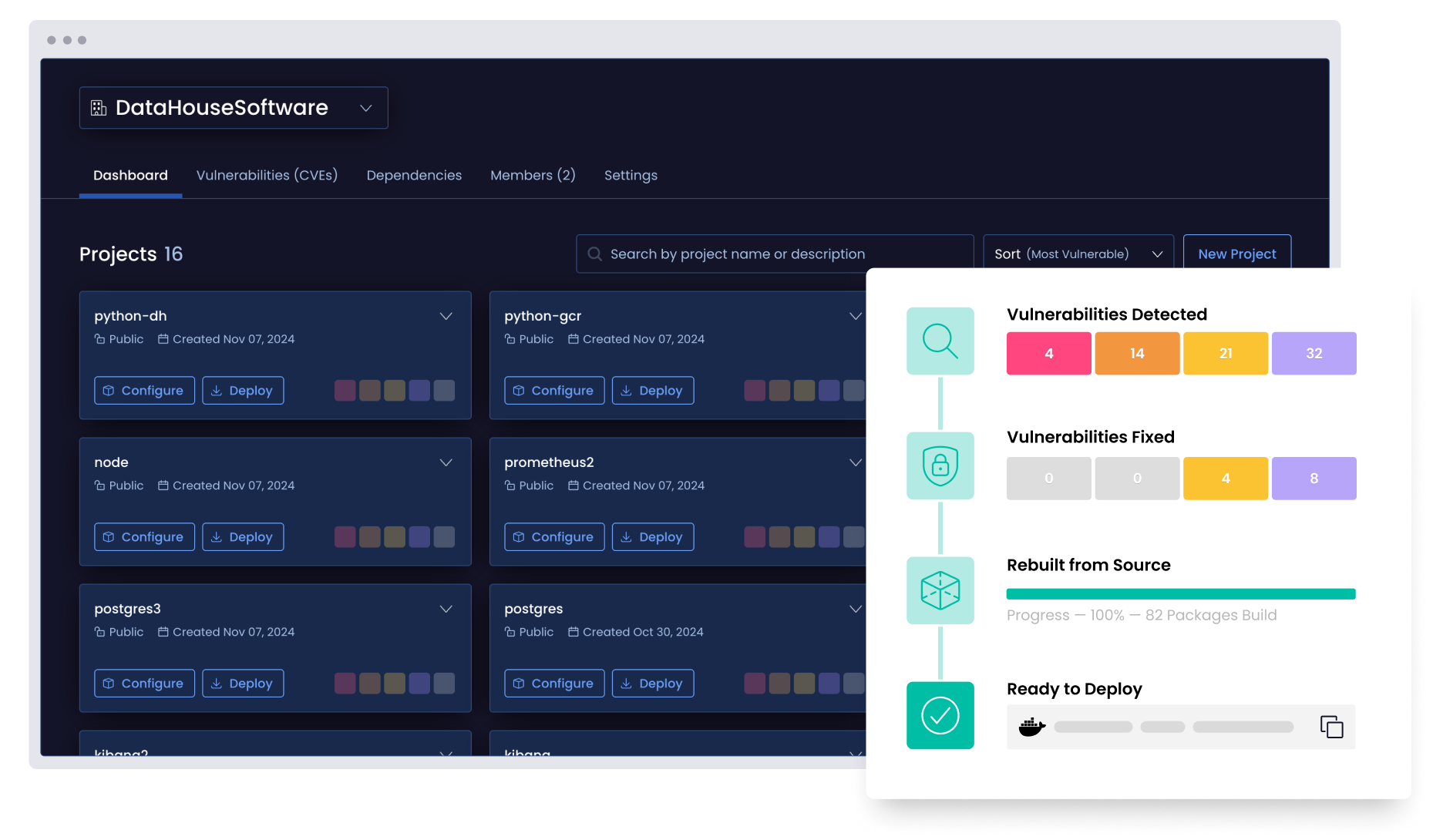

- Discover vulnerabilities across your full dependency graph

- Prioritize what matters with AI-driven breaking change impact reports

- Apply and deploy secure fixes automatically, directly from source

“I don’t have to think too much about security and the complications anymore because ActiveState does it for me.”

– Stacy Leon, Sr. Technical Specialist

Stop vulnerabilities before they stop you

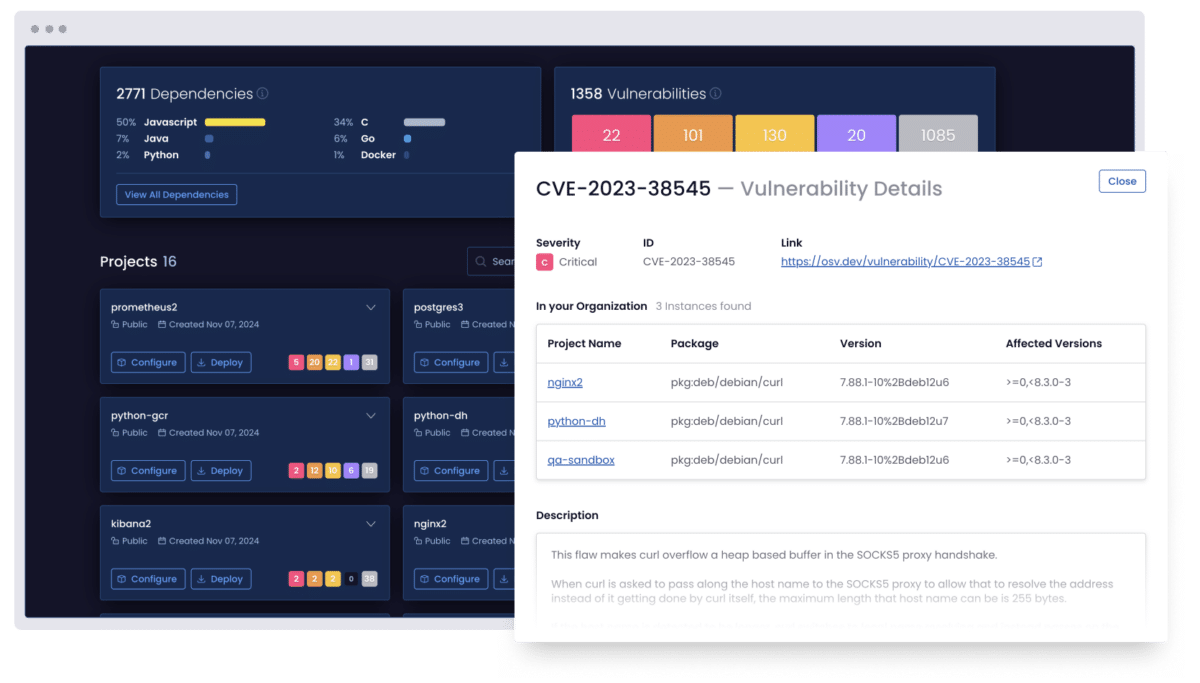

Map your risk

Reveal every vulnerability in your dependency graph, including transitive and nested issues. More importantly, visualize your vulnerabilities’ full blast radius.

Prioritize what actually matters

Our AI-powered engine evaluates exploitability, business impact, and breaking changes to surface only the risks worth your time.

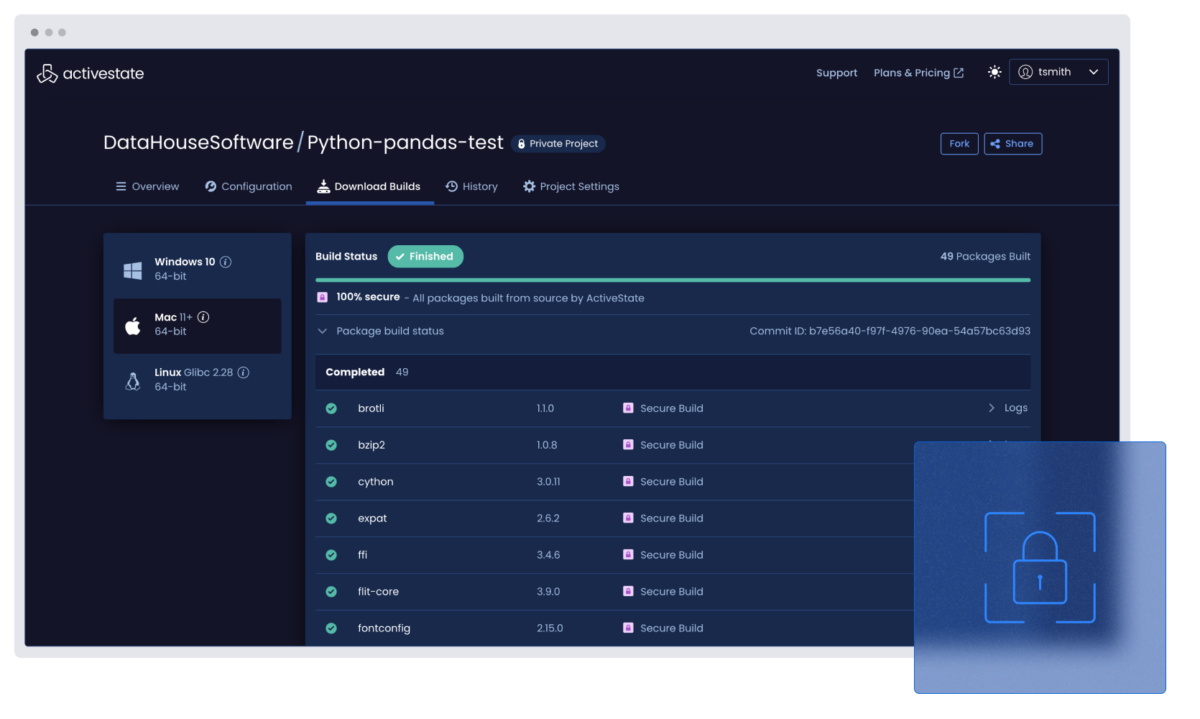

Remediate with confidence

Secure fixes are applied directly within ActiveState using secure build best practices. No guesswork, and no regressions.

Experience the ActiveState platform in action

Find out how intelligent remediation helps you go beyond alerts and actually fix open source vulnerabilities faster.

In your demo, you’ll learn how to:

- Discover and prioritize vulnerabilities using AI and breaking change impact analysis

- Remediate issues automatically with secure, build-from-source fixes

- Reduce backlog and risk without slowing down your pipeline

FAQs

How is ActiveState different from a traditional vulnerability scanner?

Traditional vulnerability scanners typically alert you only after an application has been deployed. The ActiveState platform takes a preventive approach: its vetted catalog of open source components helps block risky direct and transitive dependencies from entering your environment in the first place. It also digs deeper than most tools, analyzing down to the C library level to uncover issues others might miss.

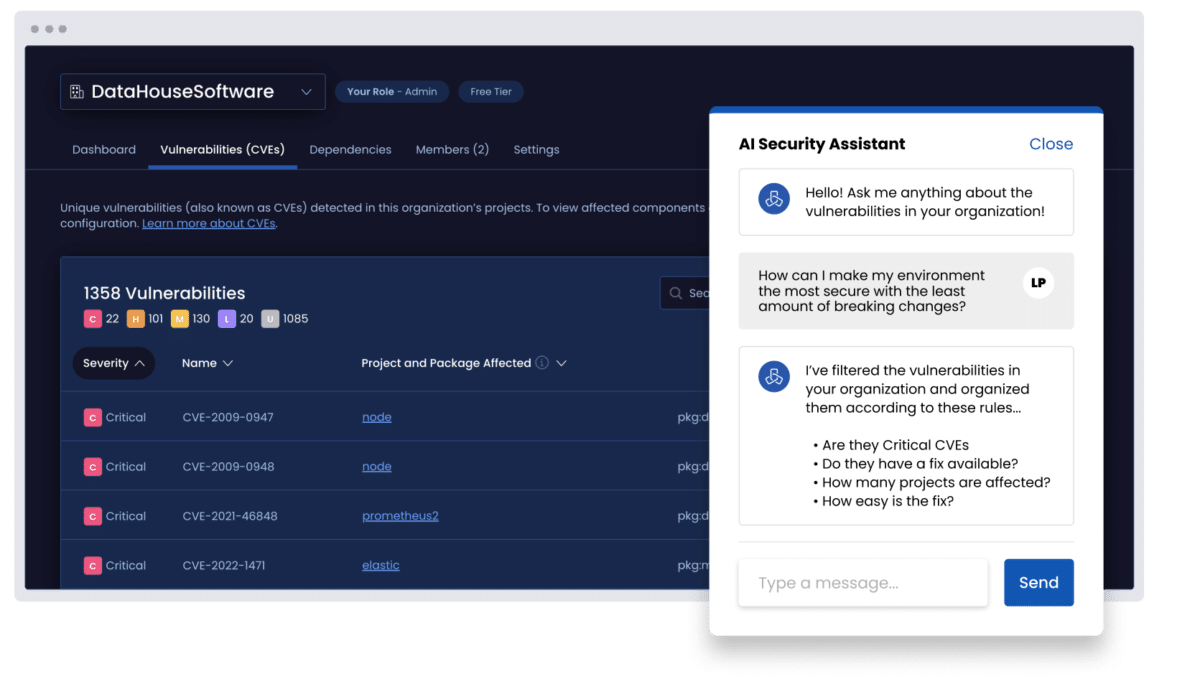

How does AI help prioritize vulnerabilities?

Our AI evaluates exploitability, breaking change risk, and business impact so you can focus only on the vulnerabilities that actually matter.

The platform shows which projects are affected, how widespread a vulnerability is, and what impact remediation will have on your application, including potential breaking changes. It also estimates the effort required to fix each issue, helping you focus on the vulnerabilities that pose the greatest risk and can be fixed with the least disruption.
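To make the idea of weighing risk against disruption concrete, here is a minimal sketch of one way such a ranking could work. It is an illustration only, not ActiveState's actual model: the `Finding` fields, the 0-to-1 scales, the formula, and the sample data are all hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    # All fields and scales are hypothetical, chosen for illustration.
    name: str
    exploitability: float        # 0.0 (hard to exploit) to 1.0 (trivially exploitable)
    business_impact: float       # 0.0 (negligible) to 1.0 (critical)
    breaking_change_risk: float  # 0.0 (safe upgrade) to 1.0 (likely to break)
    effort_hours: float          # estimated time to remediate


def priority(finding):
    """Higher score = more risk removed per unit of disruption (assumed formula)."""
    risk = finding.exploitability * finding.business_impact
    # Disruption grows with breaking-change risk and with effort (in 8-hour days).
    disruption = 1.0 + finding.breaking_change_risk + finding.effort_hours / 8.0
    return risk / disruption


# Made-up findings, not real vulnerabilities.
findings = [
    Finding("low-risk-lib", 0.2, 0.3, 0.0, 1),
    Finding("risky-major-upgrade", 0.9, 0.8, 0.9, 16),
    Finding("easy-critical-patch", 0.9, 0.8, 0.1, 2),
]

ranked = sorted(findings, key=priority, reverse=True)
for f in ranked:
    print(f"{f.name}: {priority(f):.3f}")
```

In this sketch, two findings with identical risk are separated by how disruptive the fix is, so the easy patch ranks ahead of the risky major upgrade.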

Can I fix open source vulnerabilities directly in the platform?

Yes. The ActiveState platform applies secure fixes directly within your build pipeline. No need for external patching tools or developer guesswork!

What’s included in a vulnerability blast radius report?

Our Vulnerability Blast Radius feature maps each vulnerability across your full dependency graph, including transitive and nested packages, so you see the true scope of impact before taking action.
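As a rough illustration of the concept, the sketch below computes a blast radius by walking a dependency graph in reverse from a vulnerable package. It assumes a simple in-memory graph; the `blast_radius` function and the package names are hypothetical, and it does not represent how the ActiveState feature is implemented.

```python
from collections import deque


def blast_radius(dependencies, vulnerable):
    """Return every package that depends on `vulnerable`, directly or transitively."""
    # Invert the graph so we can ask "who depends on this package?"
    dependents = {}
    for package, deps in dependencies.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(package)

    # Breadth-first walk upward from the vulnerable package.
    affected = set()
    queue = deque([vulnerable])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected


# Hypothetical dependency graph: package -> packages it depends on.
graph = {
    "web-app": ["http-client", "templating"],
    "batch-job": ["http-client"],
    "http-client": ["tls-lib"],
    "templating": [],
    "tls-lib": [],
}

print(sorted(blast_radius(graph, "tls-lib")))
```

Here neither `web-app` nor `batch-job` declares `tls-lib` directly, yet both fall inside its blast radius through `http-client`, which is the kind of transitive exposure a direct-dependency check would miss.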

Explore more resources

Why VMaaS Is Important for Your Enterprise Cybersecurity Strategy

ActiveState’s VMaaS solution delivers the last mile of vulnerability management through risk prioritization, precision remediation, and expert guidance. Here’s why it’s important to your enterprise cybersecurity strategy.

The 2025 State of Vulnerability Management and Remediation Report

Open source powers everything. Our latest report provides a candid look at how organizations manage vulnerabilities and remediation, and why traditional tools are no longer enough.

What is VMaaS? Understanding Vulnerability Management as a Service

Does it feel like your DevSecOps teams are constantly dodging cybersecurity threats? It’s a frustrating reality for many. Explore why opting for security-as-a-service can help your team overcome these mounting challenges.