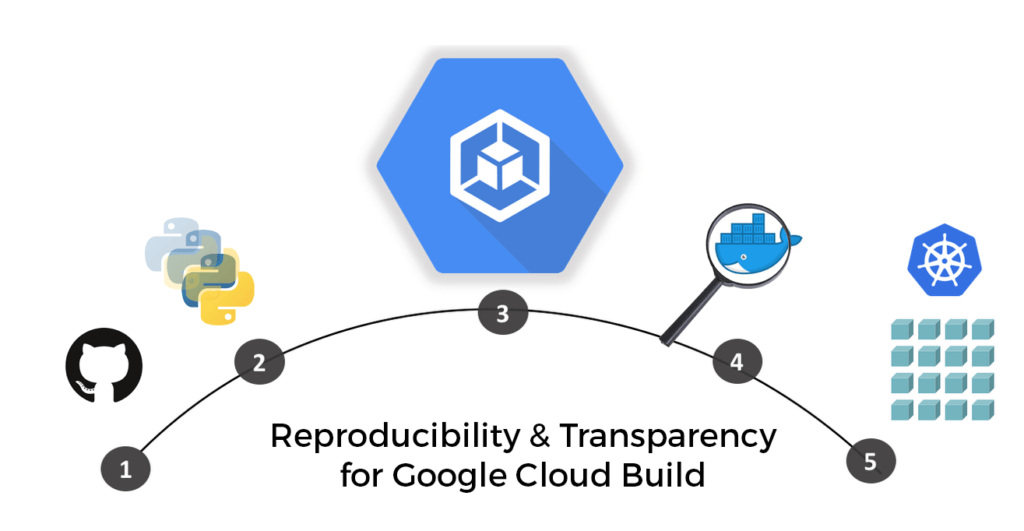

In the world of “cloud native” technologies and solutions, one of the most important technologies is the container. When you’re discussing containers, it is almost impossible not to mention Docker and Kubernetes. Both of these popular open-source technologies have contributed extensively to the cloudification of IT infrastructure, and specialized ecosystems of cloud-native software have developed around them.

When it comes to CI/CD, most vendors support containers either as a way to provide the base execution environment or as a way to store the artifacts created at the end of the pipeline. Google Cloud Build (GCB), by contrast, is a “container-native” product that is built directly on top of a container pipeline.

My previous posts on optimizing CI/CD pipelines using ActiveState tooling dealt primarily with VM-based CI/CD environments. As a container-only solution, GCB takes advantage of Google’s Cloud infrastructure to execute builds faster and cheaper. In both cases, the benefits of the ActiveState tooling remain the same:

- Reproducibility – a consistent runtime environment that ensures all dependencies can be accounted for, pinned, and can be reproduced on demand (especially relevant given GCB’s reliance on ephemeral containers).

- Provenance – all runtime artifacts are built from source, providing transparency into the open source supply chain, and traceability of production workloads back to the original packages and commits.

The result is a more secure and compliant Google Cloud Build CI/CD pipeline. This post will walk you through the steps of creating a GCB pipeline that incorporates the ActiveState Platform so you can see the benefits for yourself.

Google Cloud Build’s Containerized Pipeline

Google has gone all-in on container technology when building its Google Cloud Platform, so it’s no surprise that its CI/CD offering leverages containers as well. But instead of using containers to provide an isolated environment within which to execute CI build scripts, GCB actually executes each step (or command) of these scripts in a separate container, taking containerization to the extreme. This enables each step to be completely self-contained, so steps that don’t depend on each other can be run in parallel, which helps keep GCB builds fast. This kind of isolation prevents the side effects of any one step from contaminating the following ones, and makes artifact-passing and messaging between steps explicit. The downside is that it requires more configuration on the part of the user, and can make script creation more difficult. But once the concepts are clear, it provides a safer and more stable environment for the CI jobs to run.
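To make the idea of independent steps a little more concrete, here is a minimal Python sketch (it is not part of GCB) of how a build's steps could be grouped into "waves" that may run at the same time. It assumes Cloud Build's documented `id` and `waitFor` step fields, where a step with no `waitFor` waits for every step before it, so parallelism has to be requested explicitly. The `execution_waves` function and the step ids are hypothetical names chosen for illustration.

```python
from typing import Dict, List


def execution_waves(steps: List[dict]) -> List[List[str]]:
    """Group step ids into waves of steps that could run concurrently.

    Assumed rules, mirroring Cloud Build's `waitFor` field:
    - no `waitFor`: the step waits for every step listed before it;
    - `waitFor: ['-']`: the step starts as soon as the build starts;
    - otherwise: the step waits for the listed step ids, which must
      belong to steps defined earlier in the list.
    """
    wave_of: Dict[str, int] = {}
    waves: List[List[str]] = []
    seen: List[str] = []
    for step in steps:
        step_id = step["id"]
        wait_for = step.get("waitFor")
        if wait_for is None:
            deps = list(seen)
        elif wait_for == ["-"]:
            deps = []
        else:
            deps = list(wait_for)
        # A step runs one wave after the latest step it depends on.
        wave = 1 + max((wave_of[d] for d in deps), default=-1)
        wave_of[step_id] = wave
        while len(waves) <= wave:
            waves.append([])
        waves[wave].append(step_id)
        seen.append(step_id)
    return waves


# Hypothetical pipeline: the three checks only need the deployed runtime.
pipeline = [
    {"id": "deploy"},
    {"id": "pylint", "waitFor": ["deploy"]},
    {"id": "flake8", "waitFor": ["deploy"]},
    {"id": "pytest", "waitFor": ["deploy"]},
]
print(execution_waves(pipeline))
```

Dropping the `waitFor` entries from this example puts every step in its own wave, which corresponds to the default one-after-another behaviour.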

GCB provides a number of pre-containerized build steps called “Cloud Builders” that can be called from a build configuration file, and chained together to create a build pipeline. Cloud Builders can contain languages as well as tools, allowing you to do language setup and dependency management. It’s also possible to access container registries (Google’s own Google Container Registry, as well as its cached copy of Docker Hub) to pull any type of pre-built container for use in build steps. Be aware of one limitation: GCB only officially supports Linux.

So, without further ado, let’s set up a Python runtime environment in Google Cloud Build and execute some tests in a containerized CI pipeline.

Getting Started with Google Cloud Build

Once again, I’ll be using a sample application (the same one I used in my previous CI/CD blog posts) written in Python and hosted on GitHub. GitHub will be our source of truth for the code, and we’ll use the ActiveState Platform as the source of truth for the runtime environment, which includes a version of Python and all the packages the GitHub project requires (note that the process here is just as valid for Perl projects/runtimes, as well). GCB will grab the runtime from the ActiveState Platform and the source code from GitHub, and then build and run its tests for a successful round of development iteration.

GCB uses triggers from GitHub to kick off its builds, so we’ll set it up on our GitHub project. It is also possible to kick off builds manually using Google’s gcloud CLI, but for the sake of simplicity we’ll stick with the GitHub triggers for this blog post. As we’ll be using a community-created Cloud Builder, it would be best to follow the instructions here to make sure you’re ready to go.

First things first:

- Sign up for a Google Cloud account, if you don’t have one already. You can also get free build minutes (120 per day as of this writing) to use with Cloud Build.

- Sign up for Secret Manager, which is the Google Cloud service we’ll need to securely manage our authentication requirements.

- Sign up for a free ActiveState Platform account.

- Install the State Tool (the CLI for the ActiveState Platform) on Linux:

sh <(curl -q https://platform.www.activestate.com/dl/cli/install.sh)

- Check out the runtime environment for this project located on the ActiveState Platform.

- Check out the project’s code base hosted on GitHub. Note that the source code project and the ActiveState Platform project are integrated using an activestate.yaml file located at the root folder of the GitHub code base. You can refer to instructions on how to create this file and learn more about how it works in the ActiveState Platform docs here.

All set? Let’s dive into the details.

Integrating Google Cloud Build with GitHub

Log into your Google Cloud account, and then do the following:

- Create a project or select an existing one for the purposes of using GCB.

- Enable billing for the project.

- Enable the Cloud Build API for your project.

- Enable Google Cloud’s Secret Manager API. We’ll be using secrets in our project to authenticate with the ActiveState Platform.

- Assign yourself the necessary permissions to create and access secrets.
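If you prefer the command line to the console, the two API prerequisites above can also be scripted. The sketch below only builds and prints a `gcloud services enable` command rather than running it. The `enable_apis_cmd` helper and the `my-gcb-project` project ID are placeholders made up for illustration, so substitute your own project ID.

```python
import shlex
from typing import List

# Service names for the Cloud Build and Secret Manager APIs.
REQUIRED_APIS = ["cloudbuild.googleapis.com", "secretmanager.googleapis.com"]


def enable_apis_cmd(project_id: str) -> List[str]:
    """Return the gcloud argv that enables the APIs this post relies on."""
    return ["gcloud", "services", "enable", *REQUIRED_APIS,
            f"--project={project_id}"]


# "my-gcb-project" is a hypothetical project ID.
print(shlex.join(enable_apis_cmd("my-gcb-project")))
```

Passing the returned list to `subprocess.run(..., check=True)` would execute it, assuming the gcloud CLI is installed and authenticated.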

With the prerequisites out of the way, we can now start creating the trigger that will kick off the build workflow from GitHub. We’ll be targeting a Linux build for this integration since GCB only supports Linux builds at the moment.

On GitHub:

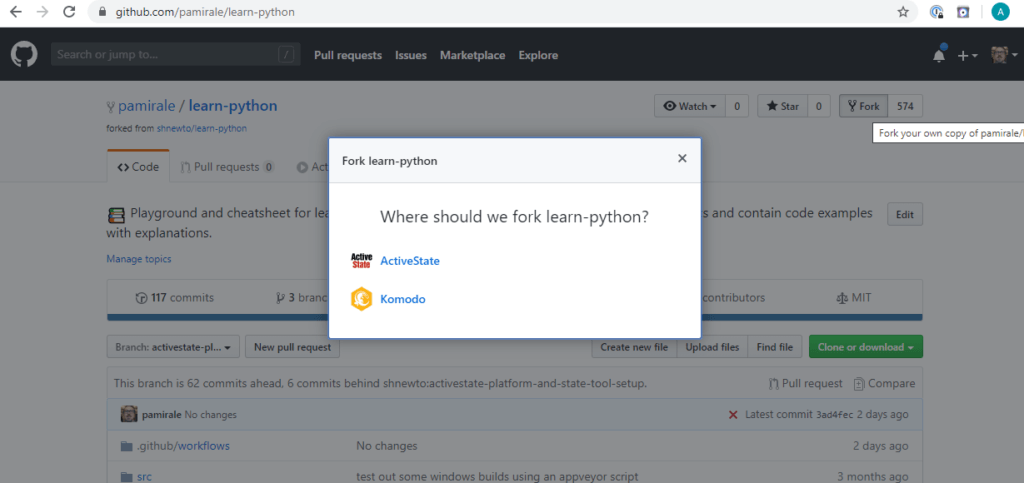

1. Sign into GitHub.

2. Fork the learn-python project into your account.

3. In order to tie your project in GitHub to GCB, you’ll need to create a build trigger. Using triggers will help run your builds automatically when a git push or pull request is detected. To keep things simple, we’ll use GitHub App triggers.

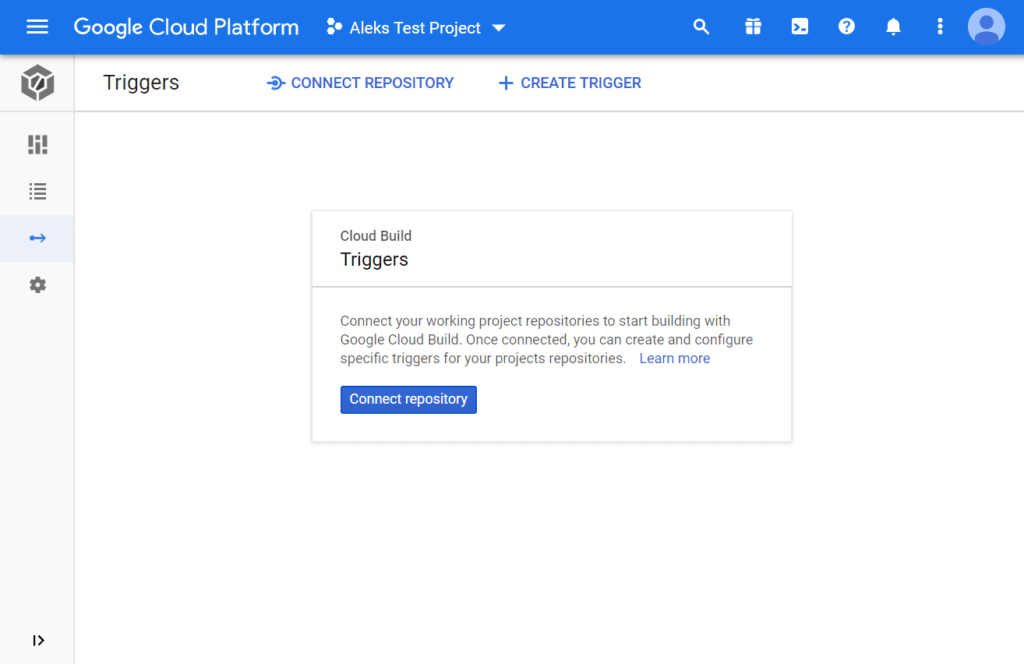

Open the Triggers page in the Google Cloud Console:

4. Choose your Google Cloud project in the top bar and click the Connect Repository button.

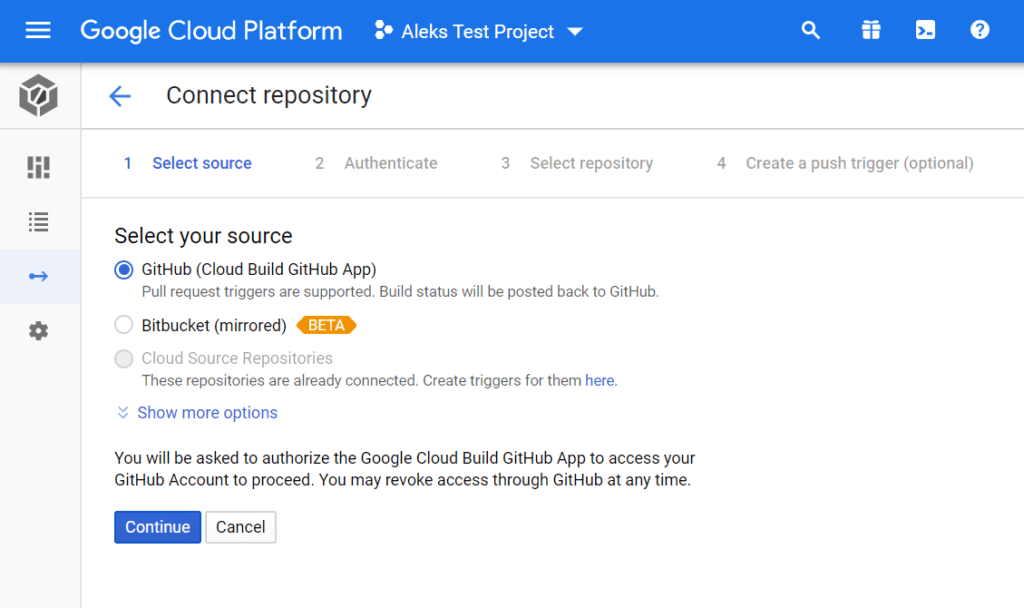

5. Select “GitHub (Cloud Build GitHub App)” and click the Continue button.

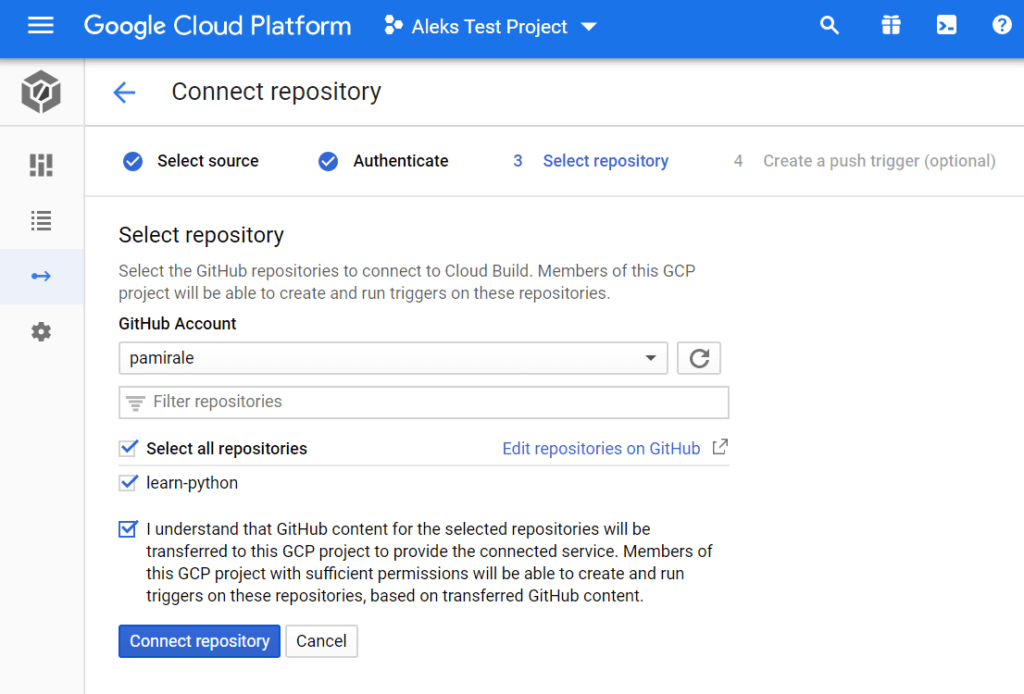

6. When prompted, provide authorization for Google Cloud Build, and then follow the steps to complete the installation of the Google Cloud Build app on GitHub. Once you’re done, you’ll be returned to the repository selection on Google Cloud.

7. Google Cloud will pull in information from GitHub and display your projects. You’ll need to select the learn-python project that you forked previously, click the consent checkbox, and then click the Connect Repository button.

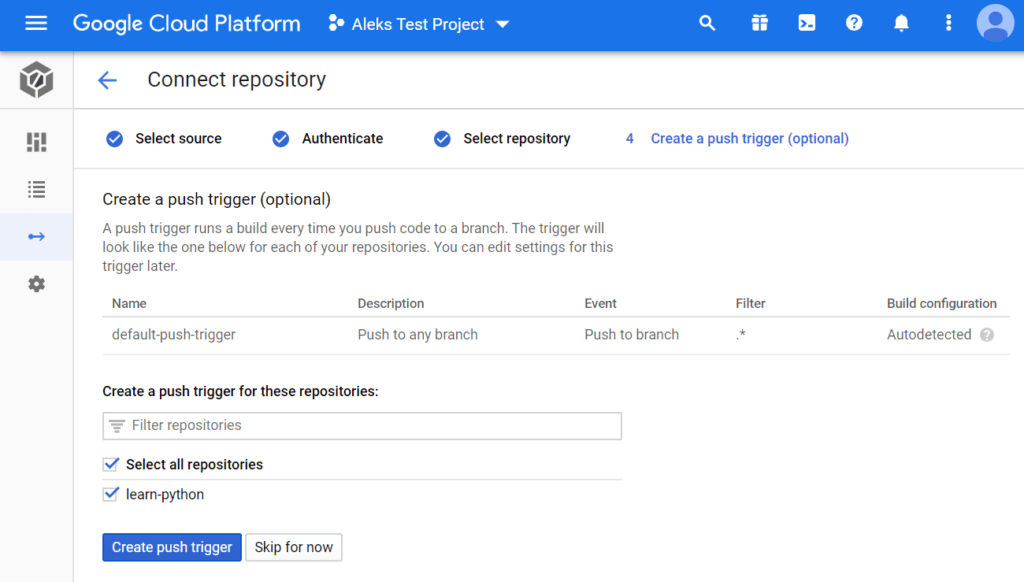

The next step in the workflow is to create a push trigger. You can do this by selecting your learn-python repo and clicking on the Create Push Trigger button.

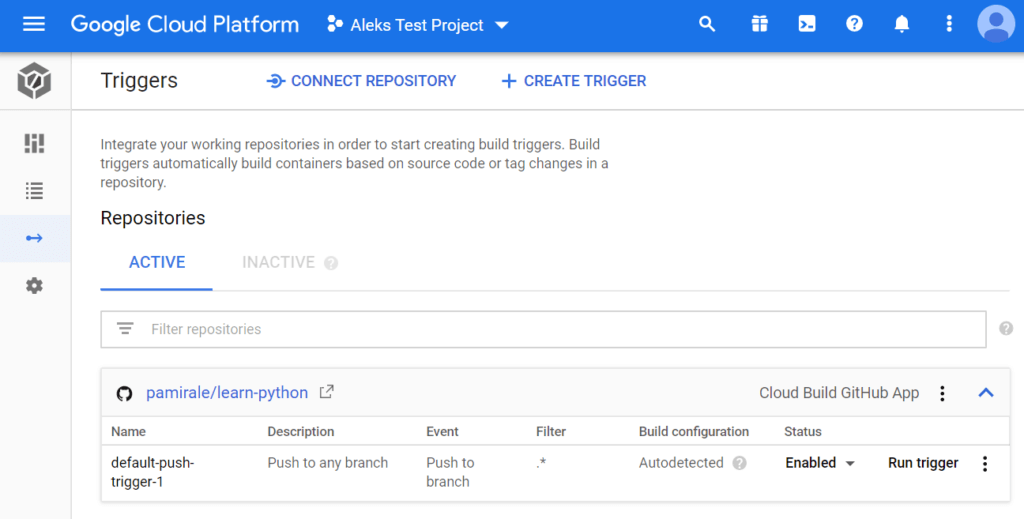

At this point, any pushes to your GitHub project will trigger a build on GCB. You can also click the Run Trigger button on this screen and select a branch to trigger the builds manually.

Google Cloud Build Pipeline Configuration

Now that we’ve hooked up GCB to GitHub, let’s look at the build pipeline configuration, which is comparatively simple. GCB pulls in your configuration from a standard Dockerfile (for simpler builds) or a cloudbuild.yaml file located at the root of your source code folder. The .yaml file is very similar to the ones used in other CI/CD products, so we’ll use that in our example:

    steps:
    - name: gcr.io/$PROJECT_ID/state
      args: ['state','auth']
    - name: gcr.io/$PROJECT_ID/state
      args: ['state', 'deploy', 'shnewto/learn-python', '--path', '/workspace/.state']
    - name: gcr.io/$PROJECT_ID/state
      args: ['pylint','src']
    - name: gcr.io/$PROJECT_ID/state
      args: ['flake8','src','--statistics','--count']
    - name: gcr.io/$PROJECT_ID/state
      args: ['pytest']

- Authentication – The ActiveState Platform project that contains the runtime environment is a public project, and therefore requires no authentication. This step is included here to demonstrate how to access private projects. If you create a private project on the ActiveState Platform, you’ll need to create a secret for your credentials via Google’s Secret Manager, similar to the API key instructions below. If you’re using a public project, you can safely remove this step from the cloudbuild.yaml file.

- Runtime Deployment – This step deploys the Python custom runtime configured for the Learn Python project into the build environment. More information about configuring this step for your custom projects can be found here.

- Code Analysis – This is a best practices step that runs a linter to look for programming errors and generally check the quality of the source code.

- Code Style – A second code analysis tool to check the code base against style guidelines.

- Testing – The pytest framework is invoked to run a simple test against the code.
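The five steps described above can also be generated programmatically, which makes the "drop the authentication step for public projects" choice explicit. This is only a sketch: `build_steps` and its `private` flag are hypothetical names, and it prints the configuration as JSON on the assumption that you would save it as a JSON build config, which Cloud Build accepts alongside YAML.

```python
import json
from typing import List

BUILDER = "gcr.io/$PROJECT_ID/state"  # the State Cloud Builder image


def build_steps(project: str, private: bool = False) -> List[dict]:
    """Return the build steps, adding `state auth` only for private projects."""
    commands = []
    if private:
        # Only needed when the ActiveState Platform project is private.
        commands.append(["state", "auth"])
    commands += [
        ["state", "deploy", project, "--path", "/workspace/.state"],
        ["pylint", "src"],                            # code analysis
        ["flake8", "src", "--statistics", "--count"],  # code style
        ["pytest"],                                   # tests
    ]
    return [{"name": BUILDER, "args": args} for args in commands]


config = {"steps": build_steps("shnewto/learn-python")}
print(json.dumps(config, indent=2))
```

Calling `build_steps("shnewto/learn-python", private=True)` restores the authentication step at the front of the list.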

Before making this configuration active and running any builds, there are a few remaining setup steps you’ll need to take in GCB:

- Integrate GCB with the ActiveState Platform using State Cloud Builder’s built-in authentication for Secret Manager.

- Deploy State Cloud Builder into the project.

Integrating Google Cloud Build with the ActiveState Platform

To call the ActiveState Platform from GCB, we’ll need to obtain an API key and set it as a secret using the following steps:

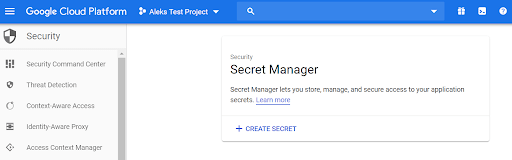

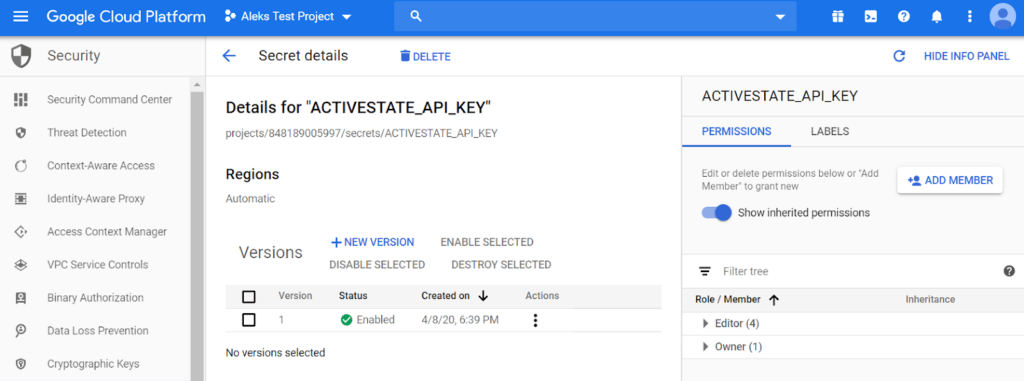

1. Go to Secret Manager in your Google Cloud project. This is a paid service that you need to explicitly enable before use.

2. Click “Create Secret.”

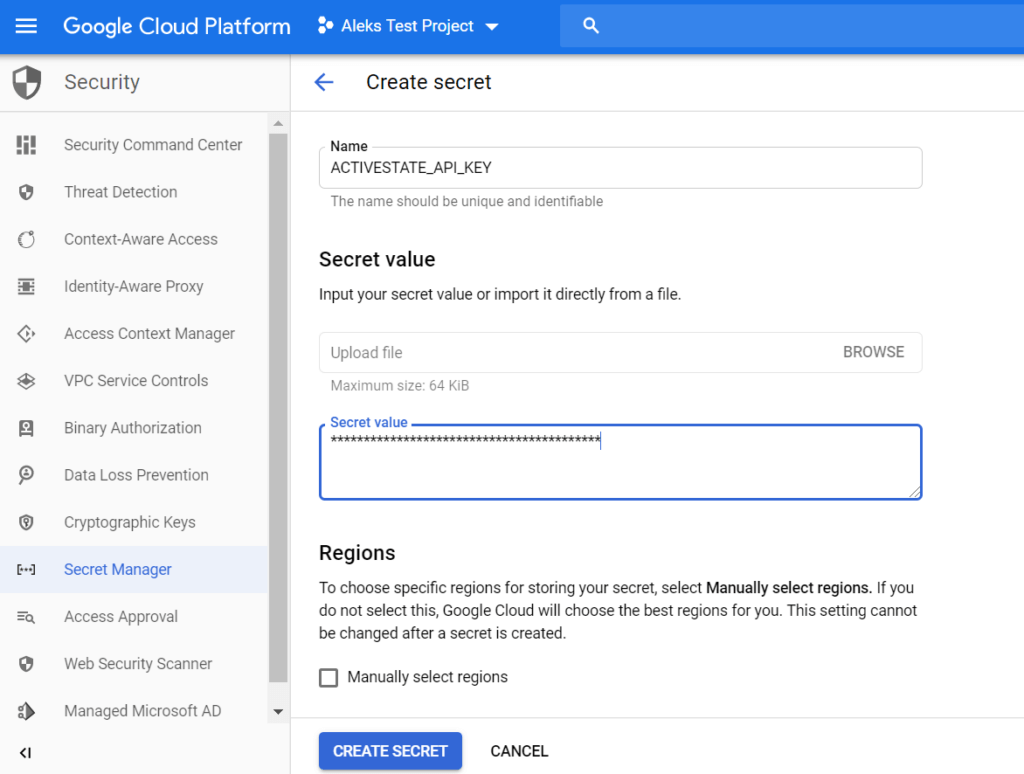

3. Type ACTIVESTATE_API_KEY as the name of your secret.

4. To obtain the “Secret value” (i.e., the actual API Key), first authenticate with the ActiveState Platform using your account credentials:

state auth --username <yourname> --password <yourpassword>

5. And then run the following command:

state export new-api-key APIKeyForCI

The value printed is your API key. Don’t close your terminal, as you’ll need it again shortly.

6. Copy the API Key value into the “Secret value” field and click the Create Secret button. You should now see your secret details and the “full path” to your secret (projects/<PROJECT ID>/secrets/<SECRET NAME>).

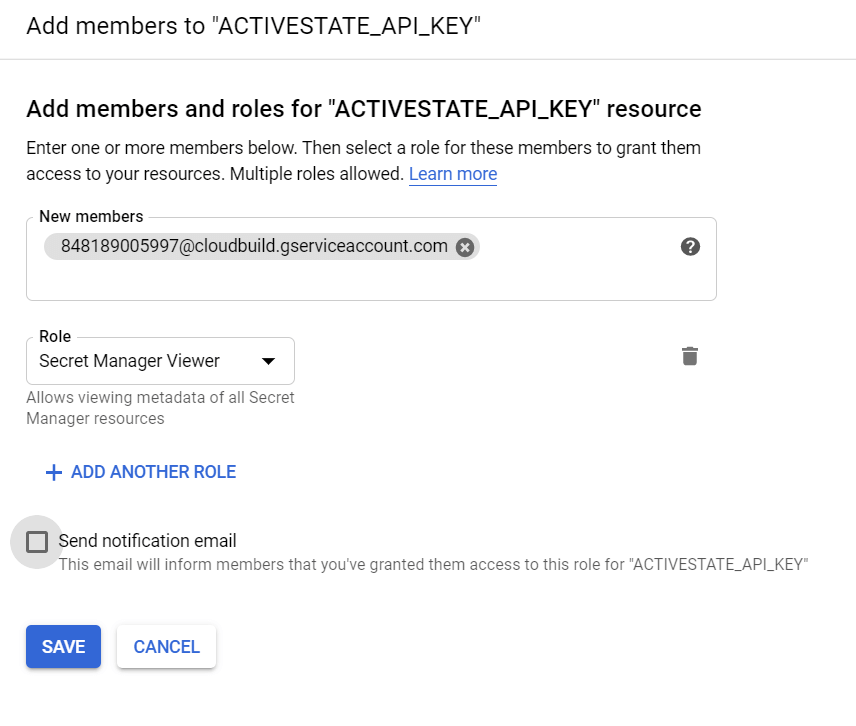

7. Click “Add Member” to give GCB permission to access this secret.

8. Type the GCB service account (<PROJECT_NUMBER>@cloudbuild.gserviceaccount.com; note that the address uses your numeric project number, not the project ID) in the “New members” field and then choose “Secret Manager Secret Accessor” as the Role. Click Save to finish.

This process is a bit more involved than other CI tools, which may be an artifact of how seriously Google takes security.
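For anyone who would rather script the console steps above, here is a rough Python sketch that assembles the equivalent gcloud commands without running them. The helper names and the `123456789012` project number are hypothetical. The sketch assumes the default Cloud Build service account, whose address is keyed by the numeric project number.

```python
import shlex
from typing import List

SECRET_NAME = "ACTIVESTATE_API_KEY"


def project_number_cmd(project_id: str) -> List[str]:
    """Command that prints the numeric project number for a project ID."""
    return ["gcloud", "projects", "describe", project_id,
            "--format=value(projectNumber)"]


def create_secret_cmd(secret: str = SECRET_NAME) -> List[str]:
    """Command that creates the secret, reading its value from stdin.

    Using --data-file=- keeps the API key out of the argument list.
    """
    return ["gcloud", "secrets", "create", secret,
            "--replication-policy=automatic", "--data-file=-"]


def grant_access_cmd(project_number: str,
                     secret: str = SECRET_NAME) -> List[str]:
    """Command that lets the Cloud Build service account read the secret."""
    member = f"serviceAccount:{project_number}@cloudbuild.gserviceaccount.com"
    return ["gcloud", "secrets", "add-iam-policy-binding", secret,
            f"--member={member}",
            "--role=roles/secretmanager.secretAccessor"]


# Placeholder values; substitute your own project ID and number.
for cmd in (project_number_cmd("my-gcb-project"),
            create_secret_cmd(),
            grant_access_cmd("123456789012")):
    print(shlex.join(cmd))
```

To run these for real, each list could be handed to `subprocess.run`, with the API key supplied to the create command through its `input` argument.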

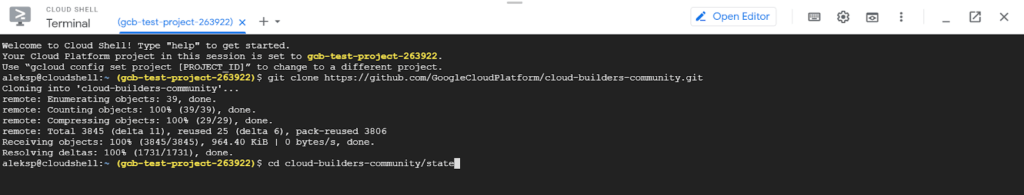

Finally, we’ll need to deploy State Cloud Builder in order to make it available to the project. This takes just a few Linux terminal commands, which can be run either in your usual terminal or in Cloud Shell, Google Cloud’s terminal-in-a-browser. We’ll use Cloud Shell here since it has all the prerequisites installed and configured:

1. You can install the gcloud CLI using the instructions here, or skip local installation and just use the Cloud Shell.

2. Clone the Cloud Builder Source from here:

git clone https://github.com/GoogleCloudPlatform/cloud-builders-community.git

3. Go to the State folder:

cd cloud-builders-community/state

4. Submit the builder into your Google Cloud project:

gcloud builds submit . --config=cloudbuild.yaml

Running a Build with Google Cloud Build

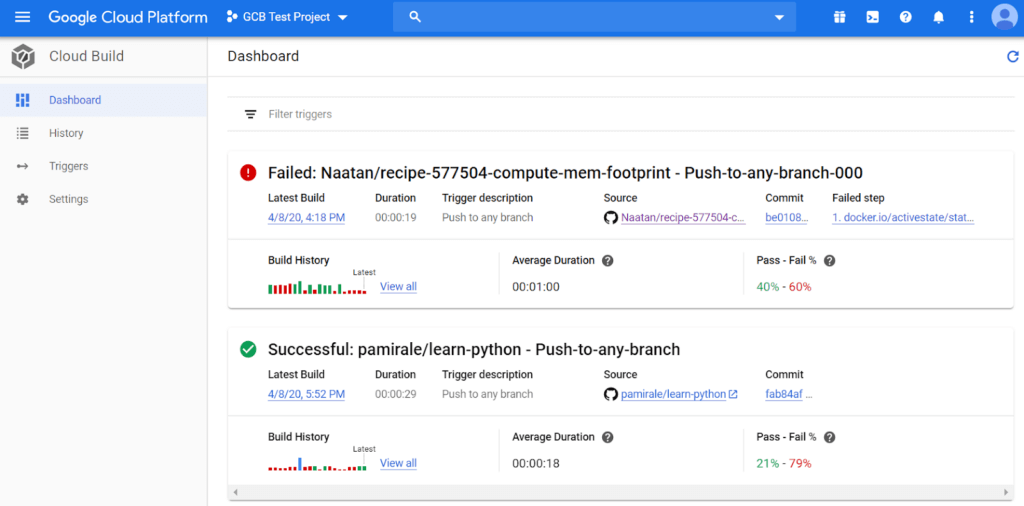

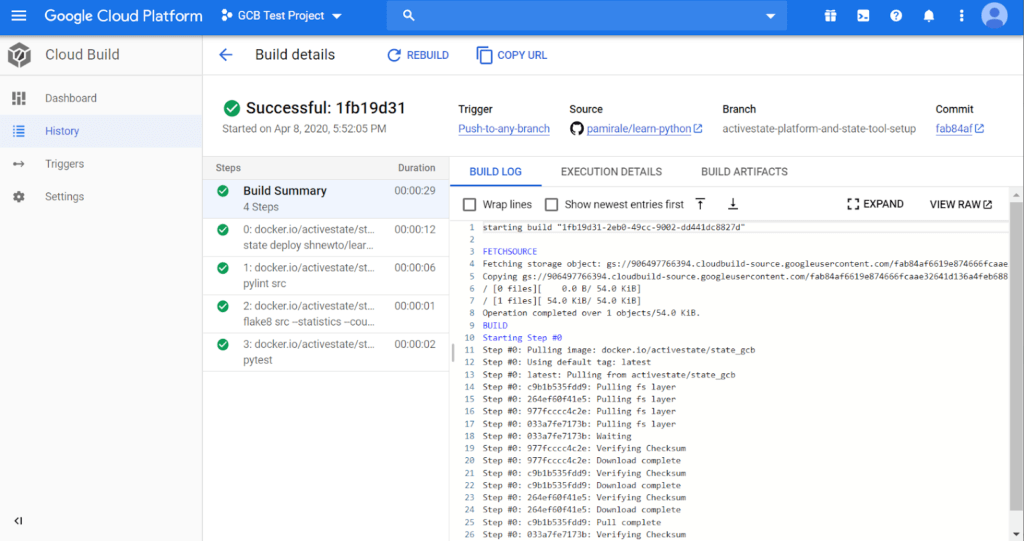

With everything set up, we can now trigger a build by making a small change to the GitHub project and committing it. You can see the results in the Google Cloud Build dashboard.

Click on the project name to see the log files and detailed results about the build.

You can click the Rebuild button to rerun a previous build on this screen, or click on Triggers in the left hand menu and click “Run Trigger” to manually run builds.

Conclusions

As a latecomer to the party, Google Cloud Build is still trying to catch up with the other CI/CD vendors. Single-product companies are further ahead in overall product functionality, but the Cloud vendors are trying to close the gap by playing to their main strength: close integration with their own ecosystems. Google has done a good job with GCB, which is strongly integrated with other services of the Google Cloud Platform. This makes a big difference in terms of security and stability, and GCB is second to none in these areas. Because GCB is built on the container infrastructure of Google Cloud Platform, it is rock solid and delivers consistent performance. In my experience, build times on most platforms vary widely from run to run, but GCB builds usually take the same amount of time to complete.

The ramp-up to the Google Platform is quite a bit steeper than the other tools I’ve evaluated in my series of CI/CD blog posts, so small projects may not benefit as much as larger, more complex ones. But if you are already invested in the Google Cloud ecosystem, or have multiple projects that need reliable CI/CD, it’s definitely worth evaluating.

Combining GCB with a custom runtime environment built on the ActiveState Platform will increase the security and stability of your builds even further. The ActiveState Platform builds all the packages included in your runtime, plus their dependencies from vetted source code. Binary artifacts can be traced back to their original source in these builds, helping to resolve the build provenance issue all the way from the operating system itself (provided by GCB) through the container to the very contents of the language components.

And the ActiveState Platform, in conjunction with the State Tool, simplifies development workflow and CI/CD setup by providing a consistent, reproducible environment deployable with a single command to developer desktops, test instances and production systems. Simplifying CI/CD setup reduces training and maintenance time, but it’s the reproducibility that can provide the biggest benefit since it eliminates issues that arise from differences between build, development and production environments (a.k.a. the “works on my machine” problem) that DevOps often wrestles with.

As a result, the ActiveState Platform ensures your projects can be built (and rebuilt at any point in time) in a secure, consistent manner, while providing visibility into the CI/CD pipeline and improving the traceability of production workloads.

- If you’d like to try it out, sign up for a free ActiveState Platform account where you can create your own runtime environment and download the State Tool.

- Find out how your enterprise’s CI/CD practices compare to those of other enterprises. Our State of Enterprise CI/CD 2020 survey has concluded and the results are here. Find our CI/CD resources here.
